Evaluation of 2D-/3D-Feet-Detection Methods for Semi-Autonomous Powered Wheelchair Navigation

Mid Sweden University

Holmgatan 10, 85170 Sundsvall, Sweden

IMMS Institut für Mikroelektronik- und Mechatronik-Systeme Gemeinnützige GmbH

Ehrenbergstraße 27, 98693 Ilmenau, Germany

Faculty of Science

University of Ontario Institute of Technology

2000 Simcoe St. N., Oshawa ON L1G 0C5, Canada

Abstract

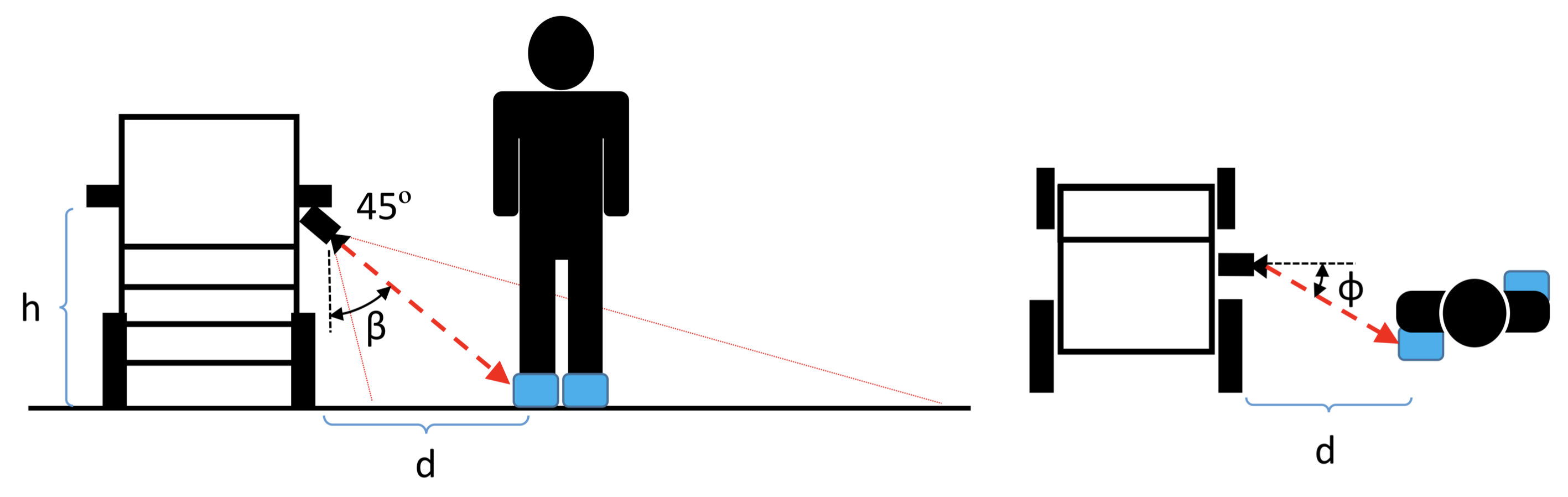

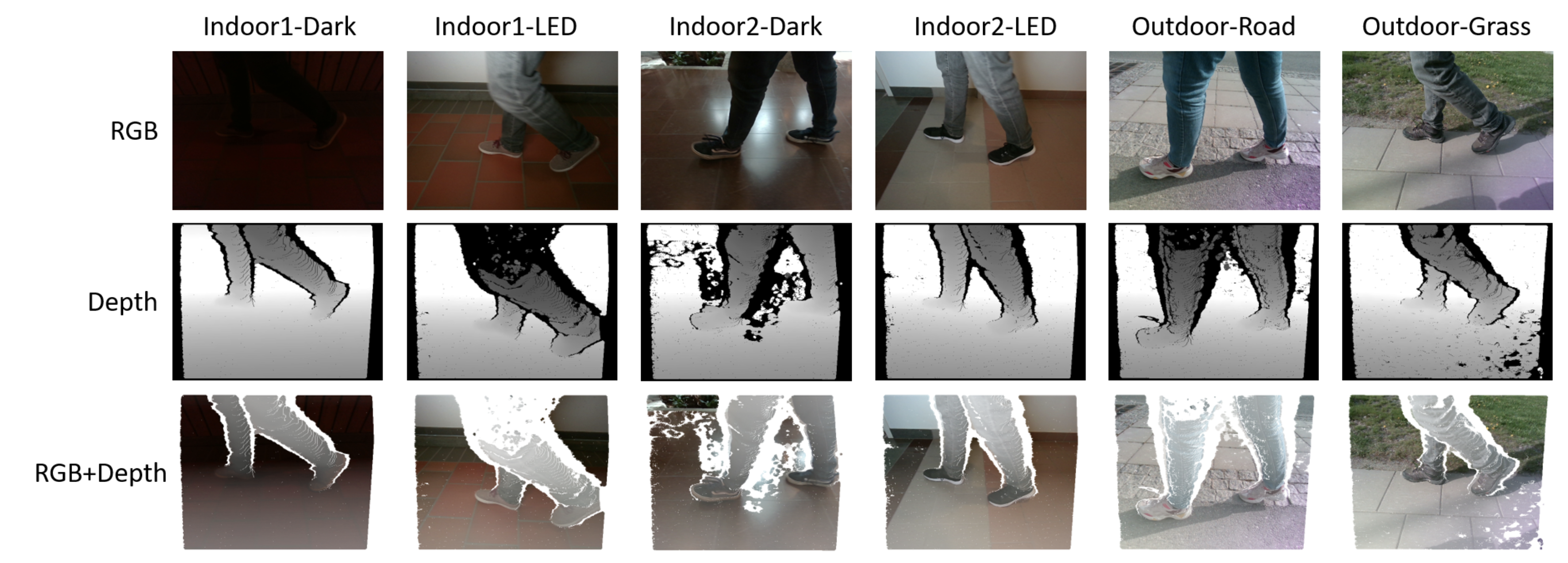

Powered wheelchairs have enhanced the mobility and quality of life of people with special needs. The next step in their development is to incorporate sensors and electronic systems that enable new control applications and capabilities, such as obstacle avoidance or autonomous driving, to improve their usability and operational safety. However, autonomous powered wheelchairs must navigate safely in many different environments and scenarios, which makes their development complex. In our research, we instead propose contactless control for powered wheelchairs in which the position of the caregiver is used as the control reference. To this end, we used a depth camera to recognize the caregiver and simultaneously measure their distance relative to the powered wheelchair. In this paper, we compared two approaches for real-time object recognition: 3DHOG, a hand-crafted object descriptor based on a 3D extension of the histogram of oriented gradients (HOG), and a convolutional neural network based on YOLOv4-Tiny. To evaluate both approaches, we constructed Miun-Feet, a custom dataset of labeled images of caregivers' feet in different scenarios with varying backgrounds, objects, and lighting conditions. The experimental results showed that the YOLOv4-Tiny approach outperformed 3DHOG in all the analyzed cases. In addition, the results showed that using the depth channel did not improve recognition accuracy, which makes it possible to use a monocular RGB camera instead of a depth camera and thereby reduce the computational cost and heat-dissipation limitations. The paper therefore proposes an additional method to compute the caregiver's distance and angle from the powered wheelchair (PW) using only RGB data. This work shows that the location of the caregiver's feet can feasibly serve as a control signal for a powered wheelchair and that their relative position can be computed with a monocular RGB camera.
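The abstract mentions computing the caregiver's distance and angle from RGB data alone but does not say how. One common approach, shown here purely as a sketch and not necessarily the method used in the paper, is to back-project the pixel where the detected feet touch the floor (for example, the bottom-center of a YOLO bounding box) onto a flat ground plane. The `PinholeCamera` class, the flat-floor assumption, and the known camera height and downward pitch are all hypothetical and introduced only for illustration.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class PinholeCamera:
    """Hypothetical calibration of a wheelchair-mounted RGB camera."""
    fx: float  # focal length in pixels (x)
    fy: float  # focal length in pixels (y)
    cx: float  # principal point x (pixels)
    cy: float  # principal point y (pixels)
    height_m: float  # assumed camera height above a flat floor (m)
    pitch_rad: float  # assumed downward tilt of the optical axis (0 = level)


def feet_distance_and_angle(cam: PinholeCamera, u: float, v: float) -> Tuple[float, float]:
    """Project the floor-contact pixel (u, v) of the feet onto a flat ground plane.

    Returns (distance_m, angle_rad): the horizontal distance from the camera and
    the bearing relative to the camera's forward direction (positive = to the right).
    """
    # Normalised ray in camera coordinates (x right, y down, z forward).
    xc = (u - cam.cx) / cam.fx
    yc = (v - cam.cy) / cam.fy
    # Rotate the ray about the x axis into a level frame (X right, Y down, Z forward).
    s, c = math.sin(cam.pitch_rad), math.cos(cam.pitch_rad)
    dx = xc
    dy = yc * c + s
    dz = -yc * s + c
    if dy <= 1e-9:
        raise ValueError("pixel is on or above the horizon and cannot hit the floor")
    # Scale the ray so that it reaches the floor plane Y = height_m.
    t = cam.height_m / dy
    x, z = t * dx, t * dz
    return math.hypot(x, z), math.atan2(x, z)
```

For example, with `fx = fy = 500`, principal point `(320, 240)`, a camera 1 m above the floor and no pitch, the pixel `(570, 490)` maps to a point 1 m to the right and 2 m ahead, i.e. a distance of about 2.24 m at a bearing of about 0.46 rad. The estimate is most sensitive for pixels close to the horizon, where a one-pixel shift changes the computed distance the most.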

Synopsis

This work is part of a larger effort to develop a powered wheelchair that autonomously follows a caregiver. RGB cameras mounted on the wheelchair identify and localize the caregiver's feet. We plan to use the location and heading of the feet as the control signal that drives the wheelchair alongside the caregiver. This work shows that it is possible to estimate the location and direction of the caregiver's feet with a wheelchair-mounted RGB camera accurately enough to design a controller that moves the wheelchair next to the caregiver.
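To make the planned control loop concrete, the following hypothetical proportional controller turns an estimated feet distance and bearing into forward and turning speed commands. It is a sketch under assumed conventions (bearing positive to the right, angular velocity positive counter-clockwise) with made-up gains; it is not the project's controller, and for simplicity it ignores the heading of the feet.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class FollowGains:
    """Hypothetical tuning parameters for a caregiver-following controller."""
    target_distance_m: float = 1.0  # desired gap to the caregiver's feet (m)
    k_linear: float = 0.8  # proportional gain on the distance error (1/s)
    k_angular: float = 1.5  # proportional gain on the bearing error (1/s)
    max_linear_mps: float = 1.0  # forward speed limit (m/s)
    max_angular_rps: float = 1.0  # turning rate limit (rad/s)


def clamp(value: float, limit: float) -> float:
    """Restrict value to the symmetric interval [-limit, limit]."""
    return max(-limit, min(limit, value))


def follow_command(distance_m: float, bearing_rad: float, gains: FollowGains) -> Tuple[float, float]:
    """Return (linear m/s, angular rad/s) commands from the feet position.

    bearing_rad > 0 means the feet are to the right of the wheelchair;
    the returned angular velocity is positive for a counter-clockwise (left) turn.
    """
    # Move forward in proportion to how far the caregiver is beyond the target gap,
    # but never reverse: a negative command is clamped to a stop.
    linear = gains.k_linear * (distance_m - gains.target_distance_m)
    linear = max(0.0, min(gains.max_linear_mps, linear))
    # Turn toward the feet: a bearing to the right requires a clockwise (negative) turn.
    angular = clamp(-gains.k_angular * bearing_rad, gains.max_angular_rps)
    return linear, angular
```

Clamping the forward speed at zero means the wheelchair stops, rather than reverses, when the caregiver comes closer than the target distance. A real controller would also need filtering of noisy detections and additional safety checks.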

Dataset

The dataset is available at https://snd.gu.se/sv/catalogue/study/2021-303/1/1#dataset.

Publication

For technical details, please refer to the following publications: